AVALON: Automatic Value Assessment of News

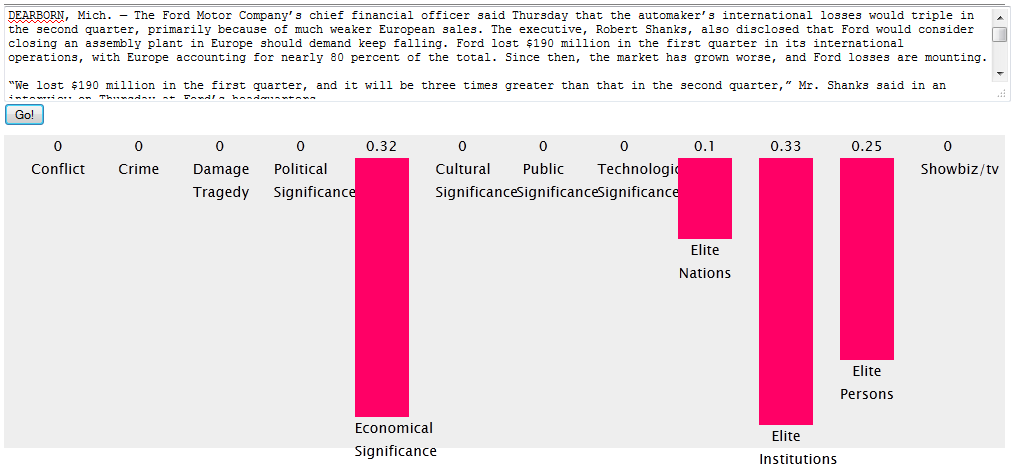

Due to the ever-increasing flow of (digital) information in today's society, journalists struggle to manage this abundance of data and to assess its newsworthiness. Although theoretical analyses and mechanisms exist to determine if something is worth publishing, applying these techniques requires substantial domain knowledge and know-how. Furthermore, the consumer's need for near-immediate reporting significantly limits the time journalists have to select and produce content. In this project, we propose an approach to automate the process of newsworthiness assessment by applying recent Semantic Web technologies to verify theoretically determined news criteria. We implemented a proof-of-concept application that generates a newsworthiness profile for a user-specified news item. A preliminary evaluation by manual verification resulted in 83.93% precision and 66.07% recall. However, further evaluation by domain experts is needed.

For more information, we refer to the latest publication about this project, "Bringing Newsworthiness into the 21st Century", available at CEUR-WS.

Do note that this application is still in its development phase, and that we plan to add more features in the near future.

Determining the Social Media Impact of News: is it possible?

To say that today's media landscape suffers from an information overload is putting it lightly. Nowadays, journalists are confronted with a tremendous amount of potentially newsworthy content, gathered from a vast array of sources. The problem is, they don't get more time or resources to filter through this content and come up with their own take on the news. As news becomes increasingly accessible to readers, these readers want high-quality news, and they want it almost instantly. Additionally, readers want the ability to comment upon this news content, and spread the word through social media. This last aspect is exactly what we at Ghent University - iMinds - Multimedia Lab aim to exploit to help the journalist select newsworthy content.

Our idea is to monitor the stream of information on social media such as Twitter, and compare it to the content of a news article. This way, we hope to give the content creator an idea of how much online "buzz" his or her topic is currently generating. However, multiple questions arise when trying to implement this:

- Which factors determine whether something has "high impact"?

- How will we access the social media streams in an on-demand and affordable (preferably free) way?

- How do we determine which social media content is relevant to the news article?

These are the challenges we aim to tackle in our research.

First, we must consider the basic elements of most currently available social media, and identify which features determine whether something or someone has a high impact. Most people will agree that the basic element of most social media is the micropost. This is true for Twitter, Facebook, Google+, etc. Furthermore, we posit that each micropost has two important aspects which determine its impact: its level of endorsement and the poster's level of influence. In the case of Twitter, endorsements are retweets, and influence is the number of followers a user has. For Facebook, endorsements correspond to 'likes' and 'shares', and a user's influence is their number of friends. These are the criteria we use in our metrics to determine the social media impact of a news article.
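The mapping described above can be sketched as a small, network-independent record. This is only an illustration: the `Micropost` class, the two helper functions, and the choice to sum likes and shares into a single endorsement count are assumptions of this sketch, not part of our actual metrics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Micropost:
    """Network-independent view of a micropost (hypothetical structure)."""
    network: str
    text: str
    endorsements: int  # how often other users endorsed the post
    influence: int     # audience size of the poster


def from_tweet(text: str, retweets: int, followers: int) -> Micropost:
    # Twitter: endorsements are retweets, influence is the follower count.
    return Micropost("twitter", text, retweets, followers)


def from_facebook_post(text: str, likes: int, shares: int, friends: int) -> Micropost:
    # Facebook: endorsements are likes and shares, influence is the friend count.
    # Adding likes and shares together is an assumption of this sketch.
    return Micropost("facebook", text, likes + shares, friends)


tweet = from_tweet("Election results are in", retweets=12, followers=340)
post = from_facebook_post("Election results are in", likes=5, shares=2, friends=150)
print(tweet.endorsements, tweet.influence)
print(post.endorsements, post.influence)
```

Reducing every network to the same two numbers keeps the later steps independent of where a micropost came from.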

To access the social media streams, we chose to implement our own social media toolbox, which acts as a hub for the various publicly accessible social media APIs; support for each API can be added in a modular fashion. This approach has its advantages and its disadvantages. The main advantage is that we are not dependent on rate limits when searching through the vast amount of microposts to determine which are relevant to our news content. However, to achieve this, the microposts need to be cached and indexed, and this process is subject to the storage and processing limitations of our servers. Therefore, we chose to cache the microposts for a limited period of time only, namely one week. For our use case, this is sufficient, as we are mostly interested in recent data. Another limitation is that our toolbox depends on which portion of the social media streams the official APIs allow us to access. For example, free access to the Twitter Streaming API is limited to 1% of all global tweets. This poses few problems when monitoring specific users, keywords or tags, but when trying to capture all activity, which is what our application aims at, this limit is reached very quickly. In other words, our toolbox does not function as a direct replacement for the official APIs, but thanks to its modularity, it offers an interesting way to get at least an indication of the activity on various social media.
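The one-week caching policy can be illustrated with a minimal in-memory sketch. The `MicropostCache` class and its methods are hypothetical names chosen for this example; the toolbox itself relies on indexed storage, which is not shown here.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(weeks=1)  # microposts are kept for one week


class MicropostCache:
    """Minimal in-memory stand-in for the cached micropost store (hypothetical)."""

    def __init__(self, retention=RETENTION):
        self.retention = retention
        self._entries = []  # (posted_at, network, text) tuples

    def add(self, posted_at, network, text):
        self._entries.append((posted_at, network, text))

    def evict_expired(self, now):
        """Drop entries older than the retention period; return how many were removed."""
        cutoff = now - self.retention
        kept = [entry for entry in self._entries if entry[0] >= cutoff]
        removed = len(self._entries) - len(kept)
        self._entries = kept
        return removed

    def __len__(self):
        return len(self._entries)


now = datetime(2013, 5, 13, tzinfo=timezone.utc)
cache = MicropostCache()
cache.add(now - timedelta(days=8), "twitter", "older than one week")
cache.add(now - timedelta(days=1), "twitter", "posted yesterday")
removed = cache.evict_expired(now)
print(removed, len(cache))
```

Passing `now` explicitly rather than reading the clock inside `evict_expired` keeps the eviction rule easy to test.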

Once access to the micropost streams is acquired, an efficient way is needed to determine which microposts are relevant to our news article. After all, as Mitchell Kapor once put it: "Getting information off the Internet is like taking a drink from a fire hydrant". The same analogy holds true for filtering relevant content from a social media stream. The main challenge here is that we do not know in advance which topics and keywords to look for, and thus cannot 'filter' the micropost stream as it comes in. Instead, we must make use of our cached micropost database, and efficiently query it for relevant content. But how do we compare a full-text article, consisting of thousands of words, to an enormous set of microposts, each only a little over 100 characters long? For this purpose, we create a sparser representation of the news article, namely some descriptive keywords and/or topics, using a publicly available API such as AlchemyAPI. These keywords are then used to query the database of microposts, and select those that are relevant to the news article. Of course, the development of more advanced ways to do this, accompanied by a thorough evaluation, is the next step in our research.
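As a rough sketch of the keyword-based selection, assume the keywords have already been extracted from the article (the extraction call itself is not shown) and that each keyword is a single word. The `tokenize` and `select_relevant` functions and the `min_matches` threshold are hypothetical; a real deployment would query an index rather than scan a list.

```python
import re


def tokenize(text):
    """Lower-case the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def select_relevant(keywords, microposts, min_matches=1):
    """Return the microposts that share at least `min_matches` keywords with the article."""
    wanted = {keyword.lower() for keyword in keywords}
    return [post for post in microposts if len(wanted & tokenize(post)) >= min_matches]


keywords = ["election", "parliament"]  # assumed output of the keyword extraction step
microposts = [
    "Election night: results are coming in",
    "Nice weather in Ghent today",
    "New parliament sworn in after the ELECTION",
]
relevant = select_relevant(keywords, microposts)
strict = select_relevant(keywords, microposts, min_matches=2)
print(relevant)
print(strict)
```

Raising `min_matches` trades recall for precision: fewer microposts are selected, but each one overlaps more with the article.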

The final step is to show everything to the user (the journalist). In our first proof-of-concept application, the journalist inputs the text of his or her article and receives a report indicating the number of relevant microposts on Twitter, the average endorsement they received, and the average influence of the users posting them. Naturally, this is only a first implementation. In future work, we aim to include more social media streams, more advanced relevance metrics, and a more in-depth report. Ideally, this report would include links to noteworthy microposts, selected either by endorsement count or by user influence.
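The three figures in the report can be computed as follows. This is a minimal sketch: the `build_report` function, the dictionary keys, and the use of plain arithmetic means are assumptions made for illustration, and the input numbers are invented.

```python
def build_report(posts):
    """Summarise relevant microposts given as (endorsements, influence) pairs."""
    count = len(posts)
    if count == 0:
        # No relevant microposts: report zeros instead of dividing by zero.
        return {"relevant_microposts": 0, "avg_endorsement": 0.0, "avg_influence": 0.0}
    return {
        "relevant_microposts": count,
        "avg_endorsement": sum(endorsements for endorsements, _ in posts) / count,
        "avg_influence": sum(influence for _, influence in posts) / count,
    }


# Invented example: two relevant tweets as (retweets, followers) pairs.
report = build_report([(2, 100), (4, 300)])
print(report)
```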

If you would like to learn more about this research, we invite you to read our article "Towards Automatic Assessment of the Social Media Impact of News Content", or attend our talk at SNOW 2013.

To follow the evolution of this project, we suggest keeping an eye on this page, or my Twitter account.